Research conducted by the federal government in 2015 found that only 41 percent of U.S. adults with a mental health condition in the previous year had gotten treatment. That dismal treatment rate has to do with cost, logistics, stigma, and being poorly matched with a professional.

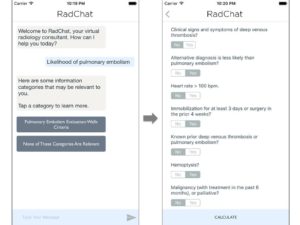

Chatbots are meant to remove or diminish these barriers. Creators of mobile apps for depression and anxiety, among other mental health conditions, have argued the same thing, but research found that very few of the apps are based on rigorous science or are even tested to see if they work.

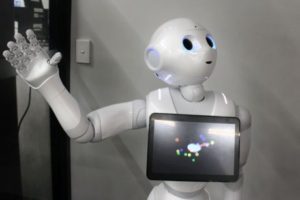

That’s why Alison Darcy, a clinical psychologist at Stanford University and the founder and CEO of Woebot, wants to set a higher standard for chatbots. Darcy co-authored a small study published this week in the Journal of Medical Internet Research that demonstrated Woebot can reduce symptoms of depression in two weeks.

Woebot presumably does this in part by drawing on techniques from cognitive behavioral therapy (CBT), an effective form of therapy that focuses on understanding the relationship between thoughts and behavior. He’s not there to heal trauma or old psychological wounds.

“We don’t make great claims about this technology,” Darcy says. “The secret sauce is how thoughtful [Woebot] is as a CBT therapist. He has a set of core principles that override everything he does.”

His personality is also partly modeled on a charming combination of Spock and Kermit the Frog.

Jonathan Gratch, director for virtual human research at the USC Institute for Creative Technologies, has studied customer service chatbots extensively and is skeptical of the idea that one could effectively intuit our emotional well-being.

“State-of-the-art natural language processing is getting increasingly good at individual words, but not really deeply understanding what you’re saying,” he says.

The risks of using a chatbot for your mental health are manifold, Gratch adds.

Darcy acknowledges Woebot’s limitations. He’s only for those 18 and over. If your mood hasn’t improved after six weeks of exchanges, he’ll prompt you to talk about getting a “higher level of care.” Upon seeing signs of suicidal thoughts or behavior, Woebot will provide information for crisis phone, text, and app resources. The best way to describe Woebot, Darcy says, is probably as “gateway therapy.”

“I have to believe that applications like this can address a lot of people’s needs.”

Source: Mashable

Spivack, the futurist, pictures people partnering with lifelong virtual companions. You’ll give an infant an intelligent toy that learns about her and tutors her and grows along with her. “It starts out as a little cute stuffed animal,” he says, “but it evolves into something that lives in the cloud and they access on their phone. And then by 2050 or whatever, maybe it’s a brain implant.” Among the many questions raised by such a scenario, Spivack asks: “Who owns our agents? Are they a property of Google?” Could our oldest friends be revoked or reprogrammed at will? And without our trusted assistants, will we be helpless?
