Jordi Ribas, left, and Kristina Behr, right, showcased Microsoft’s AI advances at an event Wednesday. Photo by Dan DeLong.

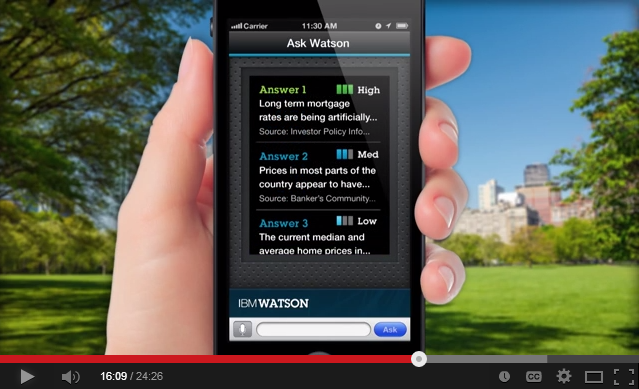

These days, people want more intelligent answers: Maybe they’d like to gather the pros and cons of a certain exercise plan or figure out whether the latest Marvel movie is worth seeing. They might even turn to their favorite search tool with only the vaguest of requests, such as, “I’m hungry.”

When people make requests like that, they don’t just want a list of websites. They might want a personalized answer, such as restaurant recommendations based on the city they are traveling in. Or they might want a variety of answers, so they can get different perspectives on a topic. They might even need help figuring out the right question to ask.

At an event in San Francisco on Wednesday, Microsoft executives showcased a number of advances in the company’s Bing search engine, Cortana intelligent assistant and Microsoft Office 365 productivity tools that use artificial intelligence to help people get more nuanced information and assist with more complex needs.

“AI has come a long way in the ability to find information, but making sense of that information is the real challenge,” said Kristina Behr, a partner design and planning program manager with Microsoft’s Artificial Intelligence and Research group.

Microsoft demonstrated some of the most recent AI-driven advances in intelligent search that are aimed at giving people richer, more useful information.

Another new, AI-driven advance in Bing is aimed at giving people multiple viewpoints on search queries that may be more subjective.

For example, if you ask Bing “is cholesterol bad,” you’ll see two different perspectives on that question.

That’s part of Microsoft’s effort to acknowledge that sometimes a question doesn’t have a clear, black-and-white answer.

“As Bing, what we want to do is we want to provide the best results from the overall web. We want to be able to find the answers and the results that are the most comprehensive, the most relevant and the most trustworthy,” Ribas said.

“Often people are seeking answers that go beyond something that is a mathematical equation. We want to be able to frame those opinions and articulate them in a way that’s also balanced and objective.”

Source: Microsoft