Much appreciation for the published paper ‘acknowledgements’ by Joel Janhonen, in the November 21, 2023 “AI and Ethics” journal published by Springer.

Research

Artificial intelligence pioneer says throw it all away and start again

Geoffrey Hinton harbors doubts about AI’s current workhorse. (Johnny Guatto / University of Toronto)

In 1986, Geoffrey Hinton co-authored a paper that, three decades later, is central to the explosion of artificial intelligence.

But Hinton says his breakthrough method should be dispensed with, and a new path to AI found.

… he is now “deeply suspicious” of back-propagation, the workhorse method that underlies most of the advances we are seeing in the AI field today, including the capacity to sort through photos and talk to Siri.

“My view is throw it all away and start again”

Hinton said that, to push materially ahead, entirely new methods will probably have to be invented. “Max Planck said, ‘Science progresses one funeral at a time.’ The future depends on some graduate student who is deeply suspicious of everything I have said.”

Hinton suggested that, to get to where neural networks are able to become intelligent on their own, what is known as “unsupervised learning,” “I suspect that means getting rid of back-propagation.”

“I don’t think it’s how the brain works,” he said. “We clearly don’t need all the labeled data.”

Source: Axios

Inside Microsoft’s Artificial Intelligence Comeback

[Yoshua Bengio, one of the three intellects who shaped the deep learning that now dominates artificial intelligence, has never been one to take sides. But Bengio has recently chosen to sign on with Microsoft. In this WIRED article he explains why.]

“We don’t want one or two companies, which I will not name, to be the only big players in town for AI,” he says, raising his eyebrows to indicate that we both know which companies he means. One eyebrow is in Menlo Park; the other is in Mountain View. “It’s not good for the community. It’s not good for people in general.”

That’s why Bengio has recently chosen to forgo his neutrality, signing on with Microsoft.

Yes, Microsoft. His bet is that the former kingdom of Windows alone has the capability to establish itself as AI’s third giant. It’s a company that has the resources, the data, the talent, and—most critically—the vision and culture to not only realize the spoils of the science, but also push the field forward.

Just as the internet disrupted every existing business model and forced a re-ordering of industry that is just now playing out, artificial intelligence will require us to imagine how computing works all over again.

In this new landscape, computing is ambient, accessible, and everywhere around us. To draw from it, we need a guide—a smart conversationalist who can, in plain written or spoken form, help us navigate this new super-powered existence. Microsoft calls it Cortana.

Because Cortana comes installed with Windows, it has 145 million monthly active users, according to the company. That’s considerably more than Amazon’s Alexa, for example, which can be heard on fewer than 10 million Echoes. But unlike Alexa, which primarily responds to voice, Cortana also responds to text and is embedded in products that many of us already have. Anyone who has plugged a query into the search box at the top of the toolbar in Windows has used Cortana.

Eric Horvitz wants Microsoft to be more than simply a place where research is done. He wants Microsoft Research to be known as a place where you can study the societal and social influences of the technology.

This will be increasingly important as Cortana strives to become, to the next computing paradigm, what your smartphone is today: the front door for all of your computing needs. Microsoft thinks of it as an agent that has all your personal information and can interact on your behalf with other agents.

If Cortana is the guide, then chatbots are Microsoft’s fixers. They are tiny snippets of AI-infused software that are designed to automate one-off tasks you used to do yourself, like making a dinner reservation or completing a banking transaction.

So far, North American teens appear to like chatbot friends every bit as much as Chinese teens, according to the data. On average, they spend 10 hours talking back and forth with Zo. As Zo advises its adolescent users on crushes and commiserates about pain-in-the-ass parents, she is becoming more elegant in her turns of phrase—intelligence that will make its way into Cortana and Microsoft’s bot tools.

It’s all part of one strategy to help ensure that in the future, when you need a computing assist–whether through personalized medicine, while commuting in a self-driving car, or when trying to remember the birthdays of all your nieces and nephews–Microsoft will be your assistant of choice.

Source: Wired (see the full in-depth article)

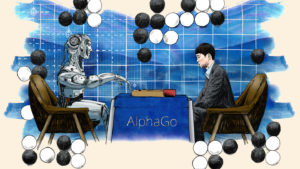

DeepMind’s social agenda plays to its AI strengths

DeepMind’s researchers have in common a clearly defined if lofty mission:

to crack human intelligence and recreate it artificially.

Today, the goal is not just to create a powerful AI to play games better than a human professional, but to use that knowledge “for large-scale social impact”, says DeepMind’s other co-founder, Mustafa Suleyman, a former conflict-resolution negotiator at the UN.

“To solve seemingly intractable problems in healthcare, scientific research or energy, it is not enough just to assemble scores of scientists in a building; they have to be untethered from the mundanities of a regular job — funding, administration, short-term deadlines — and left to experiment freely and without fear.”

“If you’re interested in advancing the research as fast as possible, then you need to give [scientists] the space to make the decisions based on what they think is right for research, not for whatever kind of product demand has just come in.”

“Our research team today is insulated from any short-term pushes or pulls, whether it be internally at Google or externally. We want to have a big impact on the world, but our research has to be protected,” Hassabis says.

“We showed that you can make a lot of advances using this kind of culture. I think Google took notice of that and they’re shifting more towards this kind of longer-term research.”

Source: Financial Times

New Research Center to Explore Ethics of Artificial Intelligence

The Chimp robot, built by a Carnegie Mellon team, took third place in a competition held by DARPA last year. The school is starting a research center focused on the ethics of artificial intelligence. Credit Chip Somodevilla/Getty Images

Carnegie Mellon University plans to announce on Wednesday that it will create a research center that focuses on the ethics of artificial intelligence.

The ethics center, called the K&L Gates Endowment for Ethics and Computational Technologies, is being established at a time of growing international concern about the impact of A.I. technologies.

“We are at a unique point in time where the technology is far ahead of society’s ability to restrain it”

Subra Suresh, Carnegie Mellon’s president

The new center is being created with a $10 million gift from K&L Gates, an international law firm headquartered in Pittsburgh.

Peter J. Kalis, chairman of the law firm, said the potential impact of A.I. technology on the economy and culture made it essential that as a society we make thoughtful, ethical choices about how the software and machines are used.

“Carnegie Mellon resides at the intersection of many disciplines,” he said. “It will take a synthesis of the best thinking of all of these disciplines for society to define the ethical constraints on the emerging A.I. technologies.”

Source: NY Times

Why we can’t trust ‘blind big data’ to cure the world’s diseases

Once upon a time a former editor of WIRED, Chris Anderson, … envisaged how scientists would take the ever expanding ocean of data, send a torrent of bits and bytes into a great hopper, then crank the handles of huge computers that run powerful statistical algorithms to discern patterns where science cannot.

In short, Anderson dreamt of the day when scientists no longer had to think.

Eight years later, the deluge is truly upon us. Some 90 percent of the data currently in the world was created in the last two years … and there are high hopes that big data will pave the way for a revolution in medicine.

But we need big thinking more than ever before.

Today’s data sets, though bigger than ever, still afford us an impoverished view of living things.

It takes a bewildering amount of data to capture the complexities of life.

The usual response is to put faith in machine learning, such as artificial neural networks. But no matter their ‘depth’ and sophistication, these methods merely fit curves to available data. We do not predict tomorrow’s weather by averaging historic records of that day’s weather.

… there are other limitations, not least that data are not always reliable (“most published research findings are false,” as famously reported by John Ioannidis in PLOS Medicine). Bodies are dynamic and ever-changing, while datasets often only give snapshots, and are always retrospective.

Source: Wired

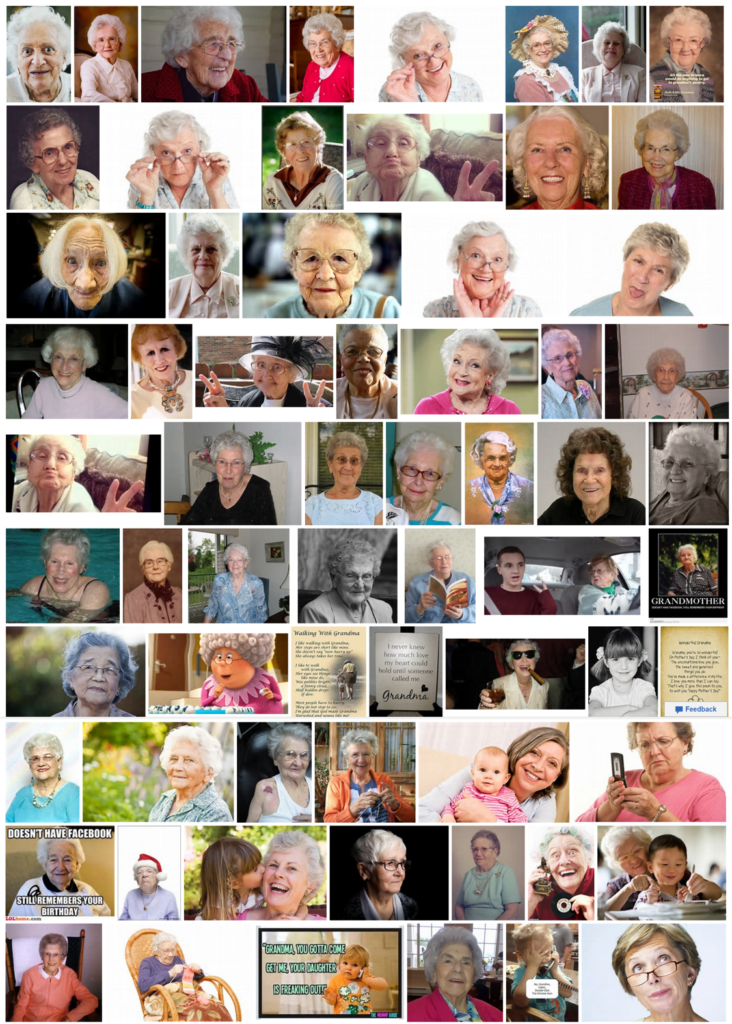

Grandma? Now you can see the bias in the data …

“Just type the word grandma in your favorite search engine image search and you will see the bias in the data, in the picture that is returned … you will see the race bias.” — Fei-Fei Li, Professor of Computer Science, Stanford University, speaking at the White House Frontiers Conference

Google image search for Grandma

Bing image search for Grandma

Artificial Intelligence Will Be as Biased and Prejudiced as Its Human Creators

The optimism around modern technology lies in part in the belief that it’s a democratizing force—one that isn’t bound by the petty biases and prejudices that humans have learned over time. But for artificial intelligence, that’s a false hope, according to new research, and the reason is boneheadedly simple: Just as we learn our biases from the world around us, AI will learn its biases from us.

Source: Pacific Standard

“Big data need big theory too”

This published paper was written by Peter V. Coveney, Edward R. Dougherty, and Roger R. Highfield.

Abstract

The current interest in big data, machine learning and data analytics has generated the widespread impression that such methods are capable of solving most problems without the need for conventional scientific methods of inquiry. Interest in these methods is intensifying, accelerated by the ease with which digitized data can be acquired in virtually all fields of endeavour, from science, healthcare and cybersecurity to economics, social sciences and the humanities. In multiscale modelling, machine learning appears to provide a shortcut to reveal correlations of arbitrary complexity between processes at the atomic, molecular, meso- and macroscales.

Here, we point out the weaknesses of pure big data approaches with particular focus on biology and medicine, which fail to provide conceptual accounts for the processes to which they are applied. No matter their ‘depth’ and the sophistication of data-driven methods, such as artificial neural nets, in the end they merely fit curves to existing data.

Source: The Royal Society Publishing

GE developing an “empathy aid” computer intelligence to improve quality of life

Peter Tu – Principal Scientist at GE Global Research on creating intelligent machines

Toyota Invests $1 Billion in Artificial Intelligence Research Center in California

Breaking News, Nov. 6:

Gill Pratt, a roboticist who will oversee Toyota’s new research laboratory in the United States, at a news conference Friday in Tokyo. (Yuya Shino/Reuters)

Toyota, the Japanese auto giant, on Friday announced a five-year, $1 billion research and development effort headquartered here. As planned, the compound would be one of the largest research laboratories in Silicon Valley.

Conceived as a research facility bridging basic science and commercial engineering, it will be organized as a new company to be named Toyota Research Institute. Toyota will initially have a laboratory adjacent to Stanford University and another near M.I.T. in Cambridge, Mass.

Toyota plans to hire 200 scientists for its artificial intelligence research center.

The new center will initially focus on artificial intelligence and robotics technologies and will explore how humans move both outdoors and indoors, including technologies intended to help the elderly.

When the center begins operating in January, it will prioritize technologies that make driving safer for humans rather than completely replacing them. That approach is in stark contrast with existing research efforts being pursued by Google and Uber to create self-driving cars.

“We want to create cars that are both safer and incredibly fun to drive,” Dr. Pratt said. Rather than completely removing driving from the equation, he described a collection of sensors and software that will serve as a “guardian angel,” protecting human drivers.

In September, when Dr. Pratt joined Toyota, the company announced an initial artificial intelligence research effort committing $50 million in funding to the computer science departments of both Stanford and M.I.T. He said the initiative was intended to turn one of the world’s most successful carmakers into one of the world’s top software developers.

In addition to focusing on navigation technologies, the new research corporation will also apply artificial intelligence technologies to Toyota’s factory automation systems, Dr. Pratt said.

Source: NY Times

Meet Pineapple, NKU’s newest artificial intelligence

Pineapple will be used for the next three years for research into social robotics

“Robots are getting more intelligent, more sociable. People are treating robots like humans! People apply humor and social norms to robots,” Dr. [Austin] Lee said. “Even when you think logically there’s no way, no reason, to do that; it’s just a machine without a heart. But because people attach human attributes to robots, I think a robot can be an effective persuader.”

Source: The Northerner

The Rise of the Robot Therapist

Social robots appear to be particularly effective in helping participants with behaviour problems develop better control over their behaviour

In recent years, we’ve seen a rise in different interactive technologies and new ways of using them to treat various mental problems. Among other things, this includes online, computer-based, and even virtual reality approaches to cognitive-behavioural therapy. But what about using robots to provide treatment and/or emotional support?

A new article published in Review of General Psychology provides an overview of some of the latest advances in robotherapy and what we can expect in the future. Written by Cristina Costescu and Daniel O. David of Romania’s Babeș-Bolyai University and Bram Vanderborght of Vrije Universiteit in Belgium, the article covers different studies showing how robotics are transforming personal care.

What they found was a fairly strong treatment effect for using robots in therapy. Compared to the participants getting robotic therapy, 69 percent of the 581 study participants getting alternative treatment performed more poorly overall.

As for individuals with autism, research has already shown that they can be even more responsive to treatment using social robots than with human therapists due to their difficulty with social cues.

Though getting children with autism to participate in treatment is often frustrating for human therapists, the children often respond extremely well to robot-based therapy designed to help them become more independent.

Source: Psychology Today

USC Study: Virtual assistants “way better” than talking to a person?

A new USC study suggests that patients are more willing to disclose personal information to virtual humans than actual ones, in large part because computers lack the proclivity to look down on people the way another human might. “We know that developing a rapport and feeling free of judgment are two important factors that affect a person’s willingness to disclose personal information,” said co-author Jonathan Gratch, director of virtual humans research at ICT and a professor in USC’s Department of Computer Science. “The virtual character delivered on both these fronts and that is what makes this a particularly valuable tool for obtaining information people might feel sensitive about sharing.”

Source: Tanya Abrams – USC

Contact: USC press release

Original Research Abstract for “It’s only a computer: Virtual humans increase willingness to disclose” by Gale M. Lucas, Jonathan Gratch, Aisha King, and Louis-Philippe Morency in Computers in Human Behavior. Published online July 9, 2014. doi:10.1016/j.chb.2014.04.043

PL – Now, read my post HERE as I “connect the dots” between this topic (above) and the shortage of real professionals in behavioral health services. Kudos to those creating AI applications to advance our machines. But the time has come to use AI to advance our humanity.