First, the site’s artificial intelligence (AI) chooses a story based on what’s popular on the internet right now. Once it picks a topic, it looks at more than a thousand news sources to gather details. Left-leaning sites, right-leaning sites – the AI looks at them all.

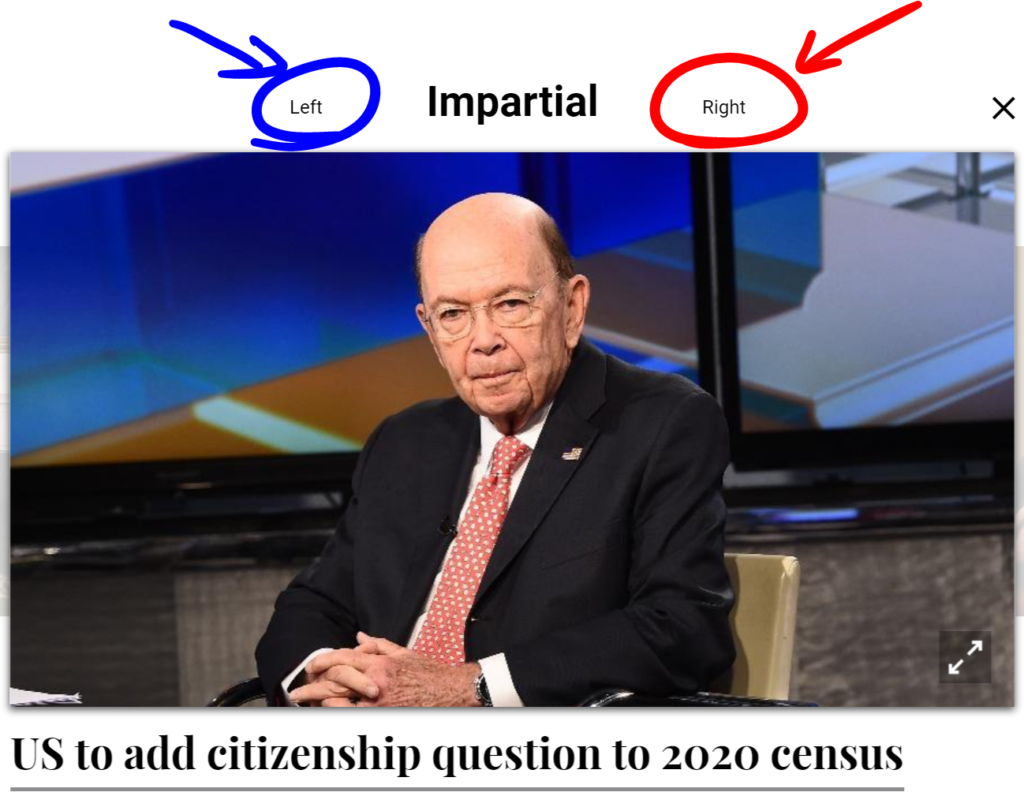

Then, the AI writes its own “impartial” version of the story based on what it finds, sometimes in as little as 60 seconds. This take on the news sticks to the most basic facts, with the AI striving to remove any potential bias. The AI also takes into account the “trustworthiness” of each source, a rating Knowhere’s co-founders assigned in advance. This ensures a site with a stellar reputation for accuracy isn’t overshadowed by one that plays a little fast and loose with the facts.
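How that trust weighting actually works isn’t spelled out, so treat the following as a rough sketch of one plausible approach rather than Knowhere’s method. It assumes each source carries a preset trust score and that a claim makes it into the “impartial” story only when the combined trust of the sources reporting it clears a threshold. The source names, scores, threshold, and function names are all invented for illustration.

```python
from collections import defaultdict

# Hypothetical trust ratings between 0 and 1 (invented, not Knowhere's data).
SOURCE_TRUST = {
    "careful-daily.example": 0.9,
    "middle-post.example": 0.6,
    "loose-gazette.example": 0.2,
}


def score_claims(reports, trust=SOURCE_TRUST, default_trust=0.1):
    """Add up the trust of every source that reports each claim."""
    scores = defaultdict(float)
    for source, claims in reports.items():
        weight = trust.get(source, default_trust)  # unknown sources get a low default
        for claim in set(claims):  # count a claim once per source
            scores[claim] += weight
    return dict(scores)


def pick_facts(reports, threshold=0.5, trust=SOURCE_TRUST):
    """Keep claims whose combined trust meets the threshold, strongest first."""
    scores = score_claims(reports, trust)
    kept = [claim for claim, score in scores.items() if score >= threshold]
    return sorted(kept, key=lambda claim: (-scores[claim], claim))


# Toy input: which claims each (hypothetical) source reports.
reports = {
    "careful-daily.example": ["census adds citizenship question"],
    "middle-post.example": ["census adds citizenship question", "california sues"],
    "loose-gazette.example": ["california sues", "census cancelled"],
}

print(pick_facts(reports))
```

In this toy run, the claim that only the low-trust outlet reports falls below the threshold and is dropped, while claims backed by better-rated sources survive. That is the behavior the paragraph above describes: a reliable site isn’t drowned out by a sloppy one.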

For some of the more political stories, the AI produces two additional versions labeled “Left” and “Right.” Those skew pretty much exactly how you’d expect from their headlines:

- Impartial: “US to add citizenship question to 2020 census”

- Left: “California sues Trump administration over census citizenship question”

- Right: “Liberals object to inclusion of citizenship question on 2020 census”

Some controversial but not necessarily political stories receive “Positive” and “Negative” spins:

- Impartial: “Facebook scans things you send on messenger, Mark Zuckerberg admits”

- Positive: “Facebook reveals that it scans Messenger for inappropriate content”

- Negative: “Facebook admits to spying on Messenger, ‘scanning’ private images and links”

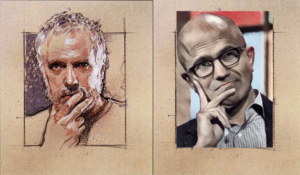

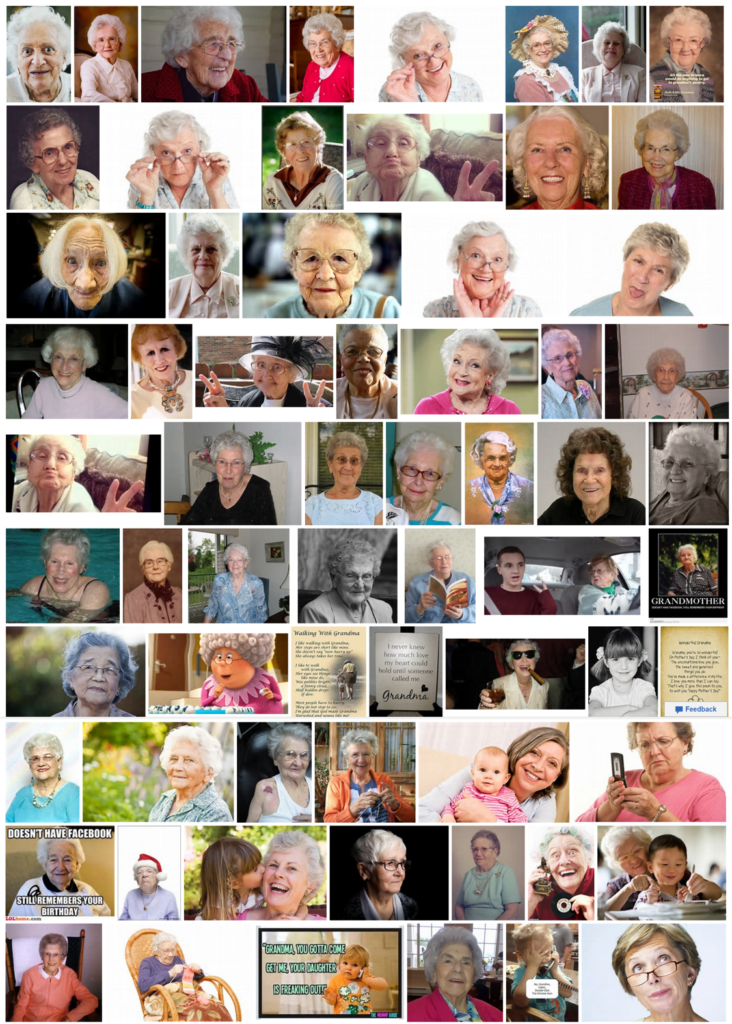

Even the images used with the stories occasionally reflect the content’s bias. The “Positive” Facebook story features CEO Mark Zuckerberg grinning, while the “Negative” one has him looking like his dog just died.

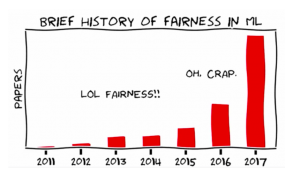

So, impartial stories written by AI. Pretty neat? Sure. But society-changing? We’ll probably need more than a clever algorithm for that.

Source: Futurism