Much appreciation for the acknowledgement in the paper by Joel Janhonen, published on November 21, 2023 in the Springer journal “AI and Ethics”.

AI Research

Life at the Intersection of AI and Society

Edits from a Microsoft podcast with Dr. Ece Kamar, a senior researcher in the Adaptive Systems and Interaction Group at Microsoft Research.

I’m very interested in the complementarity between machine intelligence and human intelligence and what kind of value can be generated from using both of them to make daily life better.

We try to build systems that can interact with people, that can work with people and that can be beneficial for people. Our group has a big human component, so we care about modelling the human side. And we also work on machine-learning decision-making algorithms that can make decisions appropriately for the domain they were designed for.

My main area is the intersection between humans and AI.

We are actually at an important point in the history of AI where a lot of critical AI systems are entering the real world and starting to interact with people. So, we are at this inflection point where, whatever AI does, and the way we build AI, have consequences for the society we live in.

“So, let’s look for what can augment human intelligence, what can make human intelligence better.” And that’s what my research focuses on. I really look for the complementarity in intelligences, and building these experiences that can, in the future, hopefully, create super-human experiences.

So, a lot of the work I do focuses on two big parts: one is how we can build AI systems that can provide value for humans in their daily tasks and make them better. But also thinking about how humans may complement AI systems.

And when we look at our AI practices, it is actually very data-dependent these days … However, data collection is not a real science. We have our insights, we have our assumptions and we do data collection that way. And that data is not always the perfect representation of the world. This creates blind spots. When our data is not the right representation of the world and it’s not representing everything we care about, then our models cannot learn about some of the important things.

“AI is developed by people, with people, for people.”

And when I talk about building AI for people, a lot of the systems we care about are human-driven. We want to be useful for humans.

We are thinking about AI algorithms that can bias their decisions based on race, gender, age. They can impact society and there are a lot of areas like judicial decision-making that touches law. And also, for every vertical, we are building these systems and I think we should be working with the domain experts from these verticals. We need to talk to educators. We need to talk to doctors. We need to talk to people who understand what that domain means and all the special considerations we should be careful about.

So, I think if we can understand what this complementarity means, and then build AI that can use the power of AI to complement what humans are good at and support them in things that they want to spend time on, I think that is the beautiful future I foresee from the collaboration of humans and machines.

Source: Microsoft Research Podcast

AI poses one of the “greatest tests of leadership for our time”

“But it is a test that I am confident we can meet”

Theresa May, UK Prime Minister

The prime minister is to say she wants the UK to lead the world in deciding how artificial intelligence can be deployed in a safe and ethical manner.

Theresa May will say at the World Economic Forum in Davos that a new advisory body, previously announced in the Autumn Budget, will co-ordinate efforts with other countries.

In addition, she will confirm that the UK will join the Davos forum’s own council on artificial intelligence.

But others may have stronger claims.

Earlier this week, Google picked France as the base for a new research centre dedicated to exploring how AI can be applied to health and the environment.

Facebook also announced it was doubling the size of its existing AI lab in Paris, while software firm SAP committed itself to a 2bn euro ($2.5bn; £1.7bn) investment into the country that will include work on machine learning.

Meanwhile, a report released last month by the Eurasia Group consultancy suggested that the US and China are engaged in a “two-way race for AI dominance”.

It predicted Beijing would take the lead thanks to the “insurmountable” advantage of offering its companies more flexibility in how they use data about its citizens.

She is expected to say that the UK is recognised as first in the world for its preparedness to “bring artificial intelligence into government”.

Source: BBC

What’s Bigger Than Fire and Electricity? Artificial Intelligence – Google

Google CEO Sundar Pichai believes artificial intelligence could have “more profound” implications for humanity than electricity or fire, according to recent comments.

Pichai also warned that the development of artificial intelligence could pose as much risk as that of fire if its potential is not harnessed correctly.

“AI is one of the most important things humanity is working on,” Pichai said in an interview with MSNBC and Recode.

“My point is AI is really important, but we have to be concerned about it,” Pichai said. “It’s fair to be worried about it—I wouldn’t say we’re just being optimistic about it— we want to be thoughtful about it. AI holds the potential for some of the biggest advances we’re going to see.”

Source: Newsweek

DeepMind’s new AI ethics unit

DeepMind made this announcement in October 2017.

Google-owned DeepMind has announced the formation of a major new AI research unit comprised of full-time staff and external advisors.

As we hand over more of our lives to artificial intelligence systems, keeping a firm grip on their ethical and societal impact is crucial.

DeepMind Ethics & Society (DMES), a unit comprised of both full-time DeepMind employees and external fellows, is the company’s latest attempt to scrutinise the societal impacts of the technologies it creates.

DMES will work alongside technologists within DeepMind and fund external research based on six areas: privacy, transparency and fairness; economic impacts; governance and accountability; managing AI risk; AI morality and values; and how AI can address the world’s challenges.

Its aim, according to DeepMind, is twofold: to help technologists understand the ethical implications of their work and help society decide how AI can be beneficial.

“We want these systems in production to be our highest collective selves. We want them to be most respectful of human rights, we want them to be most respectful of all the equality and civil rights laws that have been so valiantly fought for over the last sixty years.” [Mustafa Suleyman]

Source: Wired

DeepMind Ethics and Society hallmark of a change in attitude

The unit, called DeepMind Ethics and Society, is not the AI Ethics Board that DeepMind was promised when it agreed to be acquired by Google in 2014. That board, which was convened by January 2016, was supposed to oversee all of the company’s AI research, but nothing has been heard of it in the three-and-a-half years since the acquisition. It remains a mystery who is on it, what they discuss, or even whether it has officially met.

DeepMind Ethics and Society is also not the same as DeepMind Health’s Independent Review Panel, a third body set up by the company to provide ethical oversight – in this case, of its specific operations in healthcare.

Nor is the new research unit the Partnership on Artificial Intelligence to Benefit People and Society, an external group founded in part by DeepMind and chaired by the company’s co-founder Mustafa Suleyman. That partnership, which was also co-founded by Facebook, Amazon, IBM and Microsoft, exists to “conduct research, recommend best practices, and publish research under an open licence in areas such as ethics, fairness and inclusivity”.

Nonetheless, its creation is the hallmark of a change in attitude from DeepMind over the past year, which has seen the company reassess its previously closed and secretive outlook. It is still battling a wave of bad publicity started when it partnered with the Royal Free in secret, bringing the app Streams to active use in the London hospital without being open to the public about what data was being shared and how.

The research unit also reflects an urgency on the part of many AI practitioners to get ahead of growing concerns on the part of the public about how the new technology will shape the world around us.

Source: The Guardian

Artificial intelligence pioneer says throw it all away and start again

Geoffrey Hinton harbors doubts about AI’s current workhorse. (Johnny Guatto / University of Toronto)

In 1986, Geoffrey Hinton co-authored a paper that, three decades later, is central to the explosion of artificial intelligence.

But Hinton says his breakthrough method should be dispensed with, and a new path to AI found.

… he is now “deeply suspicious” of back-propagation, the workhorse method that underlies most of the advances we are seeing in the AI field today, including the capacity to sort through photos and talk to Siri.

“My view is throw it all away and start again”

Hinton said that, to push materially ahead, entirely new methods will probably have to be invented. “Max Planck said, ‘Science progresses one funeral at a time.’ The future depends on some graduate student who is deeply suspicious of everything I have said.”

Hinton suggested that, to get to where neural networks are able to become intelligent on their own, what is known as “unsupervised learning,” “I suspect that means getting rid of back-propagation.”

“I don’t think it’s how the brain works,” he said. “We clearly don’t need all the labeled data.”

Source: Axios

Google Debuts PAIR Initiative to Humanize #AI

We’re announcing the People + AI Research initiative (PAIR) which brings together researchers across Google to study and redesign the ways people interact with AI systems.

The goal of PAIR is to focus on the “human side” of AI: the relationship between users and technology, the new applications it enables, and how to make it broadly inclusive.

PAIR’s research is divided into three areas, based on different user needs:

- Engineers and researchers: AI is built by people. How might we make it easier for engineers to build and understand machine learning systems? What educational materials and practical tools do they need?

- Domain experts: How can AI aid and augment professionals in their work? How might we support doctors, technicians, designers, farmers, and musicians as they increasingly use AI?

- Everyday users: How might we ensure machine learning is inclusive, so everyone can benefit from breakthroughs in AI? Can design thinking open up entirely new AI applications? Can we democratize the technology behind AI?

Many designers and academics have started exploring human/AI interaction. Their work inspires us; we see community-building and research support as an essential part of our mission.

Focusing on the human element in AI brings new possibilities into view. We’re excited to work together to invent and explore what’s possible.

Source: Google blog

Inside Microsoft’s Artificial Intelligence Comeback

[Yoshua Bengio, one of the three intellects who shaped the deep learning that now dominates artificial intelligence, has never been one to take sides. But Bengio has recently chosen to sign on with Microsoft. In this WIRED article he explains why.]

“We don’t want one or two companies, which I will not name, to be the only big players in town for AI,” he says, raising his eyebrows to indicate that we both know which companies he means. One eyebrow is in Menlo Park; the other is in Mountain View. “It’s not good for the community. It’s not good for people in general.”

That’s why Bengio has recently chosen to forgo his neutrality, signing on with Microsoft.

Yes, Microsoft. His bet is that the former kingdom of Windows alone has the capability to establish itself as AI’s third giant. It’s a company that has the resources, the data, the talent, and—most critically—the vision and culture to not only realize the spoils of the science, but also push the field forward.

Just as the internet disrupted every existing business model and forced a re-ordering of industry that is just now playing out, artificial intelligence will require us to imagine how computing works all over again.

In this new landscape, computing is ambient, accessible, and everywhere around us. To draw from it, we need a guide—a smart conversationalist who can, in plain written or spoken form, help us navigate this new super-powered existence. Microsoft calls it Cortana.

Because Cortana comes installed with Windows, it has 145 million monthly active users, according to the company. That’s considerably more than Amazon’s Alexa, for example, which can be heard on fewer than 10 million Echoes. But unlike Alexa, which primarily responds to voice, Cortana also responds to text and is embedded in products that many of us already have. Anyone who has plugged a query into the search box at the top of the toolbar in Windows has used Cortana.

Eric Horvitz wants Microsoft to be more than simply a place where research is done. He wants Microsoft Research to be known as a place where you can study the societal and social influences of the technology.

This will be increasingly important as Cortana strives to become, to the next computing paradigm, what your smartphone is today: the front door for all of your computing needs. Microsoft thinks of it as an agent that has all your personal information and can interact on your behalf with other agents.

If Cortana is the guide, then chatbots are Microsoft’s fixers. They are tiny snippets of AI-infused software that are designed to automate one-off tasks you used to do yourself, like making a dinner reservation or completing a banking transaction.

So far, North American teens appear to like chatbot friends every bit as much as Chinese teens, according to the data. On average, they spend 10 hours talking back and forth with Zo. As Zo advises its adolescent users on crushes and commiserates about pain-in-the-ass parents, she is becoming more elegant in her turns of phrase—intelligence that will make its way into Cortana and Microsoft’s bot tools.

It’s all part of one strategy to help ensure that in the future, when you need a computing assist–whether through personalized medicine, while commuting in a self-driving car, or when trying to remember the birthdays of all your nieces and nephews–Microsoft will be your assistant of choice.

Source: Wired (see the full in-depth article)

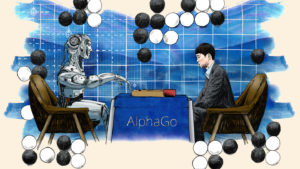

DeepMind’s social agenda plays to its AI strengths

DeepMind’s researchers have in common a clearly defined if lofty mission:

to crack human intelligence and recreate it artificially.

Today, the goal is not just to create a powerful AI to play games better than a human professional, but to use that knowledge “for large-scale social impact”, says DeepMind’s other co-founder, Mustafa Suleyman, a former conflict-resolution negotiator at the UN.

“To solve seemingly intractable problems in healthcare, scientific research or energy, it is not enough just to assemble scores of scientists in a building; they have to be untethered from the mundanities of a regular job — funding, administration, short-term deadlines — and left to experiment freely and without fear.”

“if you’re interested in advancing the research as fast as possible, then you need to give [scientists] the space to make the decisions based on what they think is right for research, not for whatever kind of product demand has just come in.”

“Our research team today is insulated from any short-term pushes or pulls, whether it be internally at Google or externally. We want to have a big impact on the world, but our research has to be protected,” Hassabis says.

“We showed that you can make a lot of advances using this kind of culture. I think Google took notice of that and they’re shifting more towards this kind of longer-term research.”

Source: Financial Times

12 Observations About Artificial Intelligence From The O’Reilly AI Conference

Blogger’s note: Here are a few excerpts from a long but very informative review. (The best may be last.)

The conference was organized by Ben Lorica and Roger Chen with Peter Norvig and Tim O’Reilly acting as honorary program chairs.

For a machine to act in an intelligent way, said [Yann] LeCun, it needs “to have a copy of the world and its objective function in such a way that it can roll out a sequence of actions and predict their impact on the world.” To do this, machines need to understand how the world works, learn a large amount of background knowledge, perceive the state of the world at any given moment, and be able to reason and plan.

Peter Norvig explained the reasons why machine learning is more difficult than traditional software: “Lack of clear abstraction barriers” (debugging is harder because it’s difficult to isolate a bug); “non-modularity” (if you change anything, you end up changing everything); “nonstationarity” (the need to account for new data); “whose data is this?” (issues around privacy, security, and fairness); and a lack of adequate tools and processes (existing ones were developed for traditional software).

AI must consider culture and context—“training shapes learning”

“Many of the current algorithms have already built in them a country and a culture,” said Genevieve Bell, Intel Fellow and Director of Interaction and Experience Research at Intel. As today’s smart machines are (still) created and used only by humans, culture and context are important factors to consider in their development.

Both Rana El Kaliouby (CEO of Affectiva, a startup developing emotion-aware AI) and Aparna Chennapragada (Director of Product Management at Google) stressed the importance of using diverse training data—if you want your smart machine to work everywhere on the planet it must be attuned to cultural norms.

“Training shapes learning—the training data you put in determines what you get out,” said Chennapragada. And it’s not just culture that matters, but also context.

The £10 million Leverhulme Centre for the Future of Intelligence will explore “the opportunities and challenges of this potentially epoch-making technological development,” namely AI. According to The Guardian, Stephen Hawking said at the opening of the Centre,

“We spend a great deal of time studying history, which, let’s face it, is mostly the history of stupidity. So it’s a welcome change that people are studying instead the future of intelligence.”

Gary Marcus, professor of psychology and neural science at New York University and cofounder and CEO of Geometric Intelligence, said:

“a lot of smart people are convinced that deep learning is almost magical—I’m not one of them … A better ladder does not necessarily get you to the moon.”

Tom Davenport added, at the conference: “Deep learning is not profound learning.”

AI changes how we interact with computers—and it needs a dose of empathy

AI continues to be possibly hampered by a futile search for human-level intelligence while locked into a materialist paradigm

Maybe, just maybe, our minds are not computers and computers do not resemble our brains? And maybe, just maybe, if we finally abandon the futile pursuit of replicating “human-level AI” in computers, we will find many additional–albeit “narrow”–applications of computers to enrich and improve our lives?

Gary Marcus complained about research papers presented at the Neural Information Processing Systems (NIPS) conference, saying that they are like alchemy, adding a layer or two to a neural network, “a little fiddle here or there.” Instead, he suggested “a richer base of instruction set of basic computations,” arguing that “it’s time for genuinely new ideas.”

Is it possible that this paradigm—and the driving ambition at its core to play God and develop human-like machines—has led to the infamous “AI Winter”? And that continuing to adhere to it and refusing to consider “genuinely new ideas,” out-of-the-dominant-paradigm ideas, will lead to yet another AI Winter?

Source: Forbes

MIT makes breakthrough in morality-proofing artificial intelligence

Researchers at MIT are investigating ways of making artificial neural networks more transparent in their decision-making.

As they stand now, artificial neural networks are a wonderful tool for discerning patterns and making predictions. But they also have the drawback of not being terribly transparent. The beauty of an artificial neural network is its ability to sift through heaps of data and find structure within the noise.

This is not dissimilar from the way we might look up at clouds and see faces amidst their patterns. And just as we might have trouble explaining to someone why a face jumped out at us from the wispy trails of a cirrus cloud formation, artificial neural networks are not explicitly designed to reveal what particular elements of the data prompted them to decide a certain pattern was at work and make predictions based upon it.

We tend to want a little more explanation when human lives hang in the balance — for instance, if an artificial neural net has just diagnosed someone with a life-threatening form of cancer and recommends a dangerous procedure. At that point, we would likely want to know what features of the person’s medical workup tipped the algorithm in favor of its diagnosis.

MIT researchers Lei, Barzilay, and Jaakkola designed a neural network that would be forced to provide explanations for why it reached a certain conclusion.

Source: Extremetech

How Deep Learning is making AI prejudiced

Blogger’s note: The authors of this research paper show what they refer to as “machine prejudice” and how it derives so fundamentally from human culture.

“Concerns about machine prejudice are now coming to the fore–concerns that our historic biases and prejudices are being reified in machines,” they write. “Documented cases of automated prejudice range from online advertising (Sweeney, 2013) to criminal sentencing (Angwin et al., 2016).”

Following are a few excerpts:

“Artificial intelligence and machine learning are in a period of astounding growth. However, there are concerns that these technologies may be used, either with or without intention, to perpetuate the prejudice and unfairness that unfortunately characterizes many human institutions. Here we show for the first time that human-like semantic biases result from the application of standard machine learning to ordinary language—the same sort of language humans are exposed to every day.

Discussion

“We show for the first time that if AI is to exploit via our language the vast knowledge that culture has compiled, it will inevitably inherit human-like prejudices. In other words, if AI learns enough about the properties of language to be able to understand and produce it, it also acquires cultural associations that can be offensive, objectionable, or harmful. These are much broader concerns than intentional discrimination, and possibly harder to address.

Awareness is better than blindness

“… where AI is partially constructed automatically by machine learning of human culture, we may also need an analog of human explicit memory and deliberate actions, that can be trained or programmed to avoid the expression of prejudice.

“Of course, such an approach doesn’t lend itself to a straightforward algorithmic formulation. Instead it requires a long-term, interdisciplinary research program that includes cognitive scientists and ethicists. …”

Semantics derived automatically from language corpora necessarily contain human biases

Aylin Caliskan-Islam, Joanna J. Bryson, and Arvind Narayanan

1 Princeton University

2 University of Bath

Draft date August 31, 2016.
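
Blogger’s note: To make the idea of a "semantic bias" in word vectors a little more concrete, here is a minimal sketch of the kind of association measurement the excerpts describe: how much closer a word sits to one set of attribute words than to another, using cosine similarity. The three-dimensional vectors below are invented by hand for illustration (they are not learned from any corpus and are not taken from the paper), and the function names are hypothetical. Because the numbers are made up, the outcome is true by construction; with vectors learned from real text, the same arithmetic is what would reveal associations absorbed from the language.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def association(word_vec, attrs_a, attrs_b):
    """Mean similarity to attribute set A minus mean similarity to set B.

    A positive score means the word sits closer to set A.
    """
    mean_a = sum(cosine(word_vec, a) for a in attrs_a) / len(attrs_a)
    mean_b = sum(cosine(word_vec, b) for b in attrs_b) / len(attrs_b)
    return mean_a - mean_b


# Hypothetical, hand-made vectors (assumption: NOT real embeddings).
TOY_VECTORS = {
    "flower": (0.9, 0.1, 0.2),
    "insect": (0.1, 0.9, 0.2),
    "pleasant": (0.8, 0.2, 0.1),
    "lovely": (0.7, 0.1, 0.3),
    "unpleasant": (0.2, 0.8, 0.1),
    "nasty": (0.1, 0.7, 0.3),
}

pleasant_words = [TOY_VECTORS["pleasant"], TOY_VECTORS["lovely"]]
unpleasant_words = [TOY_VECTORS["unpleasant"], TOY_VECTORS["nasty"]]

flower_score = association(TOY_VECTORS["flower"], pleasant_words, unpleasant_words)
insect_score = association(TOY_VECTORS["insect"], pleasant_words, unpleasant_words)

print(f"flower leans pleasant by {flower_score:.3f}")
print(f"insect leans pleasant by {insect_score:.3f}")
```

In this toy setup the "flower" vector scores positive and the "insect" vector scores negative. Nothing in the code mentions prejudice: whatever associations are present in the vectors simply show up in the scores, which is why the source of the training text matters so much.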

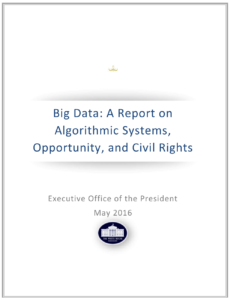

Civil Rights and Big Data

Blogger’s note: We’ve posted several articles on the bias and prejudice inherent in big data, which with machine learning results in “machine prejudice,” all of which impacts humans when they interact with intelligent agents.

Apparently, as far back as May 2014, the Executive Office of the President started issuing reports on the potential in “Algorithmic Systems” for “encoding discrimination in automated decisions”. The most recent report of May 2016 addressed two additional challenges:

1) Challenges relating to data used as inputs to an algorithm;

2) Challenges related to the inner workings of the algorithm itself.

Here are two excerpts:

The Obama Administration’s Big Data Working Group released reports on May 1, 2014 and February 5, 2015. These reports surveyed the use of data in the public and private sectors and analyzed opportunities for technological innovation as well as privacy challenges. One important social justice concern the 2014 report highlighted was “the potential of encoding discrimination in automated decisions”—that is, that discrimination may “be the inadvertent outcome of the way big data technologies are structured and used.”

To avoid exacerbating biases by encoding them into technological systems, we need to develop a principle of “equal opportunity by design”—designing data systems that promote fairness and safeguard against discrimination from the first step of the engineering process and continuing throughout their lifespan.

Download the report here: Whitehouse.gov

References:

https://www.whitehouse.gov/blog/2016/10/12/administrations-report-future-artificial-intelligence

http://www.frontiersconference.org/

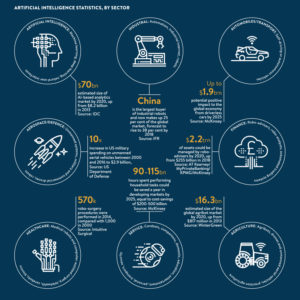

Sixty-two percent of organizations will be using artificial intelligence (AI) by 2018, says Narrative Science

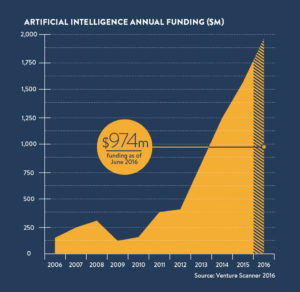

Artificial intelligence received $974m of funding as of June 2016 and this figure will only rise with the news that 2016 saw more AI patent applications than ever before.

This year’s funding is set to surpass 2015’s total and CB Insights suggests that 200 AI-focused companies have raised nearly $1.5 billion in equity funding.

AI isn’t limited to the business sphere; in fact, the personal robot market, including ‘care-bots’, could reach $17.4bn by 2020.

Care-bots could prove to be a fantastic solution as the world’s populations see an exponential rise in elderly people. Japan is leading the way, with a third of the government’s robot budget devoted to the elderly.

Source: Raconteur: The rise of artificial intelligence in 6 charts

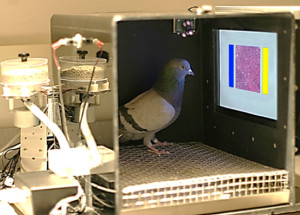

Pigeons diagnose breast cancer, could teach AI to read medical images

After years of education and training, physicians can sometimes struggle with the interpretation of microscope slides and mammograms. [Richard] Levenson, a pathologist who studies artificial intelligence for image analysis and other applications in biology and medicine, believes there is considerable room for enhancing the process.

“While new technologies are constantly being designed to enhance image acquisition, processing, and display, these potential advances need to be validated using trained observers to monitor quality and reliability,” Levenson said. “This is a difficult, time-consuming, and expensive process that requires the recruitment of clinicians as subjects for these relatively mundane tasks. Pigeons’ sensitivity to diagnostically salient features in medical images suggest that they can provide reliable feedback on many variables at play in the production, manipulation, and viewing of these diagnostically crucial tools, and can assist researchers and engineers as they continue to innovate.”

“Pigeons do just as well as humans in categorizing digitized slides and mammograms of benign and malignant human breast tissue,” said Levenson.

Source: KurzweilAI.net

Toyota Invests $1 Billion in Artificial Intelligence Research Center in California

Breaking News, Nov. 6:

Gill Pratt, a roboticist who will oversee Toyota’s new research laboratory in the United States, at a news conference Friday in Tokyo. (Yuya Shino/Reuters)

Toyota, the Japanese auto giant, on Friday announced a five-year, $1 billion research and development effort headquartered here. As planned, the compound would be one of the largest research laboratories in Silicon Valley.

Conceived as a research facility bridging basic science and commercial engineering, it will be organized as a new company to be named Toyota Research Institute. Toyota will initially have a laboratory adjacent to Stanford University and another near M.I.T. in Cambridge, Mass.

Toyota plans to hire 200 scientists for its artificial intelligence research center.

The new center will initially focus on artificial intelligence and robotics technologies and will explore how humans move both outdoors and indoors, including technologies intended to help the elderly.

When the center begins operating in January, it will prioritize technologies that make driving safer for humans rather than completely replacing them. That approach is in stark contrast with existing research efforts being pursued by Google and Uber to create self-driving cars.

“We want to create cars that are both safer and incredibly fun to drive,” Dr. Pratt said. Rather than completely removing driving from the equation, he described a collection of sensors and software that will serve as a “guardian angel,” protecting human drivers.

In September, when Dr. Pratt joined Toyota, the company announced an initial artificial intelligence research effort committing $50 million in funding to the computer science departments of both Stanford and M.I.T. He said the initiative was intended to turn one of the world’s most successful carmakers into one of the world’s top software developers.

In addition to focusing on navigation technologies, the new research corporation will also apply artificial intelligence technologies to Toyota’s factory automation systems, Dr. Pratt said.

Source: NY Times