Bloggers: Here are a few excerpts from a long but very informative review. (The best may be last.)

The conference was organized by Ben Lorica and Roger Chen with Peter Norvig and Tim O’Reilly acting as honorary program chairs.

For a machine to act in an intelligent way, said [Yann] LeCun, it needs “to have a copy of the world and its objective function in such a way that it can roll out a sequence of actions and predict their impact on the world.” To do this, machines need to understand how the world works, learn a large amount of background knowledge, perceive the state of the world at any given moment, and be able to reason and plan.

Peter Norvig explained why machine learning is more difficult than traditional software: “Lack of clear abstraction barriers” (debugging is harder because it’s difficult to isolate a bug); “non-modularity” (if you change anything, you end up changing everything); “nonstationarity” (the need to account for new data); “whose data is this?” (issues around privacy, security, and fairness); and a lack of adequate tools and processes (existing ones were developed for traditional software).

AI must consider culture and context—“training shapes learning”

“Many of the current algorithms have already built in them a country and a culture,” said Genevieve Bell, Intel Fellow and Director of Interaction and Experience Research at Intel. As today’s smart machines are (still) created and used only by humans, culture and context are important factors to consider in their development.

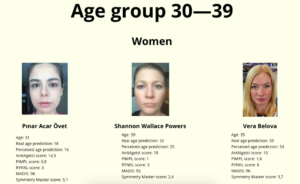

Both Rana El Kaliouby (CEO of Affectiva, a startup developing emotion-aware AI) and Aparna Chennapragada (Director of Product Management at Google) stressed the importance of using diverse training data—if you want your smart machine to work everywhere on the planet it must be attuned to cultural norms.

“Training shapes learning: the training data you put in determines what you get out,” said Chennapragada. And it’s not just culture that matters, but also context.

The £10 million Leverhulme Centre for the Future of Intelligence will explore “the opportunities and challenges of this potentially epoch-making technological development,” namely AI. According to The Guardian, Stephen Hawking said at the opening of the Centre,

“We spend a great deal of time studying history, which, let’s face it, is mostly the history of stupidity. So it’s a welcome change that people are studying instead the future of intelligence.”

Gary Marcus, professor of psychology and neural science at New York University and cofounder and CEO of Geometric Intelligence, said:

“a lot of smart people are convinced that deep learning is almost magical—I’m not one of them … A better ladder does not necessarily get you to the moon.”

Tom Davenport added, at the conference: “Deep learning is not profound learning.”

AI changes how we interact with computers—and it needs a dose of empathy

AI may continue to be hampered by a futile search for human-level intelligence while locked into a materialist paradigm

Maybe, just maybe, our minds are not computers and computers do not resemble our brains? And maybe, just maybe, if we finally abandon the futile pursuit of replicating “human-level AI” in computers, we will find many additional (albeit “narrow”) applications of computers to enrich and improve our lives?

Gary Marcus complained about research papers presented at the Neural Information Processing Systems (NIPS) conference, saying that they are like alchemy, adding a layer or two to a neural network, “a little fiddle here or there.” Instead, he suggested “a richer base of instruction set of basic computations,” arguing that “it’s time for genuinely new ideas.”

Is it possible that this paradigm—and the driving ambition at its core to play God and develop human-like machines—has led to the infamous “AI Winter”? And that continuing to adhere to it and refusing to consider “genuinely new ideas,” out-of-the-dominant-paradigm ideas, will lead to yet another AI Winter?

Source: Forbes