Much appreciation for the published paper ‘acknowledgements’ by Joel Janhonen, in the November 21, 2023 “AI and Ethics” journal published by Springer.

Training AI

Trouble with #AI Bias – Kate Crawford

This article attempts to bring our readers to Kate’s brilliant Keynote speech at NIPS 2017. It talks about different forms of bias in Machine Learning systems and the ways to tackle such problems.

The rise of Machine Learning is every bit as far reaching as the rise of computing itself.

A vast new ecosystem of techniques and infrastructure is emerging in the field of machine learning, and we are just beginning to learn their full capabilities. But alongside the exciting things that people can do, some really concerning problems are arising.

Forms of bias, stereotyping and unfair determination are being found in machine vision systems, object recognition models, and in natural language processing and word embeddings. High-profile news stories about bias have been on the rise, from women being less likely to be shown high-paying jobs, to gender bias in object recognition datasets like MS COCO, to racial disparities in education AI systems.

What is bias?

Bias is a skew that produces a type of harm.

Where does bias come from?

Commonly from training data. It can be incomplete, biased or otherwise skewed. It can draw from non-representative samples that are wholly defined before use. Sometimes the bias is not obvious because the dataset was constructed in a non-transparent way. Beyond human labeling, there are other ways that human biases and cultural assumptions can creep in, resulting in the exclusion or overrepresentation of a subpopulation. Case in point: stop-and-frisk program data used as training data by an ML system. This dataset was biased due to systemic racial discrimination in policing.
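One way to make a non-representative sample visible is to compare each group's share of the training data with its share of a reference population. The sketch below is only an illustration and is not from the talk: the function name `representation_gaps`, the group labels and the reference shares are all hypothetical.

```python
from collections import Counter


def representation_gaps(samples, reference_shares):
    """Return, per group, the dataset share minus the reference share.

    Positive values mean the group is overrepresented in the data,
    negative values mean it is underrepresented.
    """
    if not samples:
        raise ValueError("samples must not be empty")
    counts = Counter(samples)
    total = len(samples)
    return {
        group: counts.get(group, 0) / total - share
        for group, share in reference_shares.items()
    }


# Hypothetical group labels and reference shares, for illustration only.
training_groups = ["a"] * 80 + ["b"] * 15 + ["c"] * 5
reference = {"a": 0.5, "b": 0.3, "c": 0.2}

gaps = representation_gaps(training_groups, reference)
for group, gap in sorted(gaps.items()):
    print(f"{group}: {gap:+.2f}")
```

A check like this only catches skews along attributes that were recorded. It says nothing about labels that are themselves biased, as in the stop-and-frisk example.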

Harms of allocation

The majority of the literature understands bias as harms of allocation. Allocative harm is when a system allocates an opportunity or resource to certain groups, or withholds it from them. It is primarily an economically oriented view, e.g. who gets a mortgage or a loan.

Allocation is immediate: it is a time-bound moment of decision making. It is readily quantifiable. In other words, it raises questions of fairness and justice in discrete and specific transactions.
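Because allocation happens in discrete decisions, it can be counted. As a rough sketch (not a method from the talk), the code below computes the approval rate per group and the ratio of the lowest rate to the highest. The function names and the loan decisions are hypothetical.

```python
def selection_rates(decisions):
    """Map each group to its approval rate.

    decisions: iterable of (group, approved) pairs, approved being a bool.
    """
    totals, approved = {}, {}
    for group, ok in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {group: approved[group] / totals[group] for group in totals}


def rate_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical loan decisions for two groups, for illustration only.
decisions = (
    [("x", True)] * 60 + [("x", False)] * 40
    + [("y", True)] * 30 + [("y", False)] * 70
)

rates = selection_rates(decisions)
ratio = rate_ratio(rates)
print(rates, ratio)
```

A ratio well below 1.0 does not prove unfairness on its own, but it is a concrete signal that a decision process deserves a closer look.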

Harms of representation

It gets tricky when it comes to systems that represent society but don’t allocate resources. These are representational harms: systems reinforce the subordination of certain groups along lines of identity such as race, class and gender.

It is a long-term process that affects attitudes and beliefs. It is harder to formalize and track. It is a diffuse depiction of humans and society. It is at the root of all of the other forms of allocative harm.

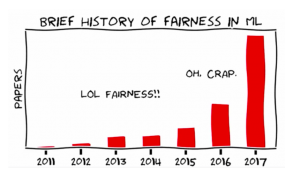

What can we do to tackle these problems?

- Start working on fairness forensics

- Test our systems, e.g. build pre-release trials to see how a system is working across different populations

- Track the life cycle of a training dataset to know who built it and what the demographic skews might be in that dataset

- Start taking interdisciplinarity seriously

- Work with people who are not in our field but have deep expertise in other areas, e.g. the FATE (Fairness, Accountability, Transparency, Ethics) group at Microsoft Research

- Build spaces for collaboration, like the AI Now Institute

- Think harder on the ethics of classification
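The pre-release trials suggested in the list above could start with something as simple as measuring accuracy separately for each population and flagging groups that trail the best-served one. This is a minimal sketch under assumed inputs: the record format, the function names and the `max_gap` threshold are hypothetical, not taken from the talk.

```python
def accuracy_by_group(records):
    """Map each group to the model's accuracy on that group.

    records: iterable of (group, predicted, actual) tuples.
    """
    correct, totals = {}, {}
    for group, predicted, actual in records:
        totals[group] = totals.get(group, 0) + 1
        correct[group] = correct.get(group, 0) + (1 if predicted == actual else 0)
    return {group: correct[group] / totals[group] for group in totals}


def groups_below(accuracies, max_gap):
    """Groups whose accuracy trails the best group by more than max_gap."""
    best = max(accuracies.values())
    return sorted(g for g, acc in accuracies.items() if best - acc > max_gap)


# Hypothetical trial results for two populations, for illustration only.
trial = (
    [("p", 1, 1)] * 95 + [("p", 1, 0)] * 5
    + [("q", 1, 1)] * 70 + [("q", 0, 1)] * 30
)

acc = accuracy_by_group(trial)
flagged = groups_below(acc, max_gap=0.10)
print(acc, flagged)
```

The threshold is a judgment call, and accuracy is only one metric. A real trial would likely also compare error types, such as false positives and false negatives, across groups.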

The ultimate question for fairness in machine learning is this:

Who is going to benefit from the system we are building? And who might be harmed?

Source: Datahub

Kate Crawford is a Principal Researcher at Microsoft Research and a Distinguished Research Professor at New York University. She has spent the last decade studying the social implications of data systems, machine learning, and artificial intelligence. Her recent publications address data bias and fairness, and social impacts of artificial intelligence among others.

Forget Killer Robots—Bias Is the Real AI Danger

John Giannandrea, who leads AI at Google, is worried about intelligent systems learning human prejudices.

… concerned about the danger that may be lurking inside the machine-learning algorithms used to make millions of decisions every minute.

“The real safety question, if you want to call it that, is that if we give these systems biased data, they will be biased,” Giannandrea said.

The problem of bias in machine learning is likely to become more significant as the technology spreads to critical areas like medicine and law, and as more people without a deep technical understanding are tasked with deploying it. Some experts warn that algorithmic bias is already pervasive in many industries, and that almost no one is making an effort to identify or correct it.

Karrie Karahalios, a professor of computer science at the University of Illinois, presented research highlighting how tricky it can be to spot bias in even the most commonplace algorithms. Karahalios showed that users don’t generally understand how Facebook filters the posts shown in their news feed. While this might seem innocuous, it is a neat illustration of how difficult it is to interrogate an algorithm.

Facebook’s news feed algorithm can certainly shape the public perception of social interactions and even major news events. Other algorithms may already be subtly distorting the kinds of medical care a person receives, or how they get treated in the criminal justice system.

This is surely a lot more important than killer robots, at least for now.

Source: MIT Technology Review

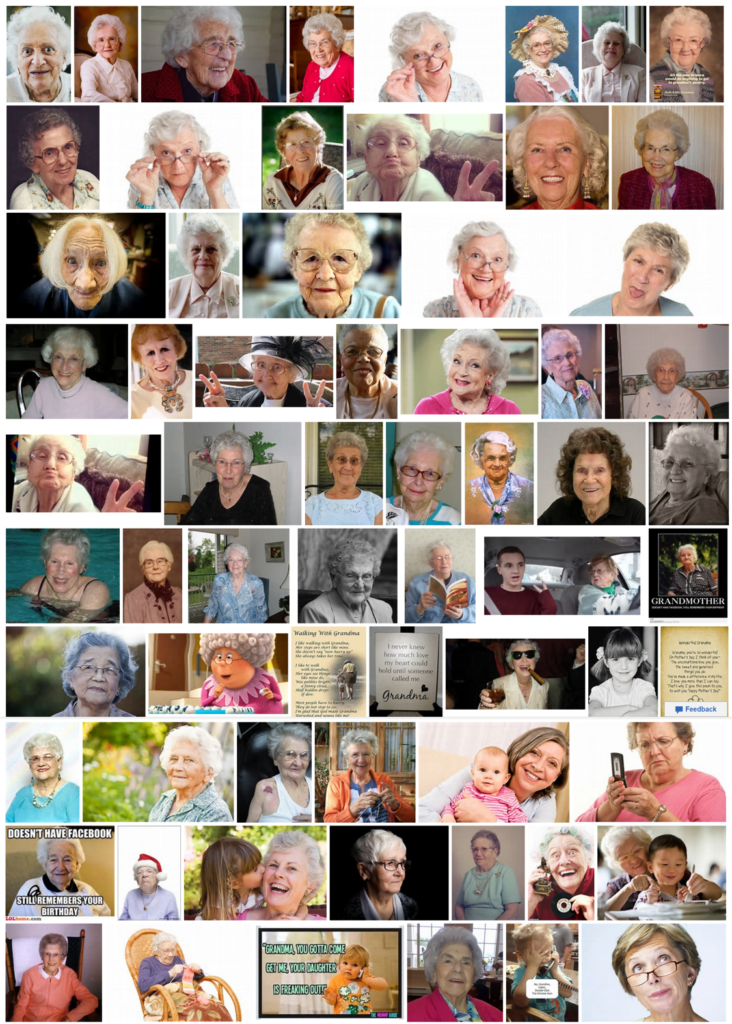

Grandma? Now you can see the bias in the data …

“Just type the word grandma in your favorite search engine image search and you will see the bias in the data, in the picture that is returned … you will see the race bias.” — Fei-Fei Li, Professor of Computer Science, Stanford University, speaking at the White House Frontiers Conference

Google image search for Grandma

Bing image search for Grandma

Artificial intelligence is quickly becoming as biased as we are

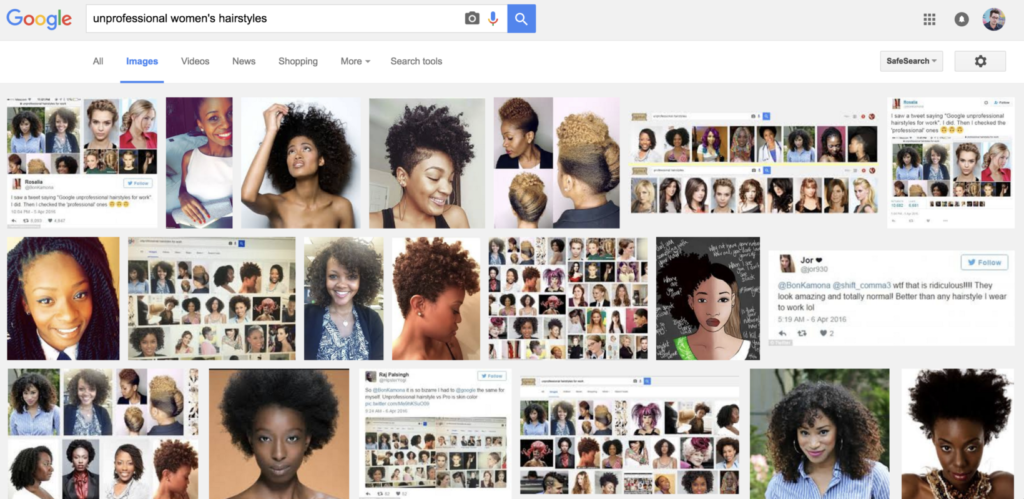

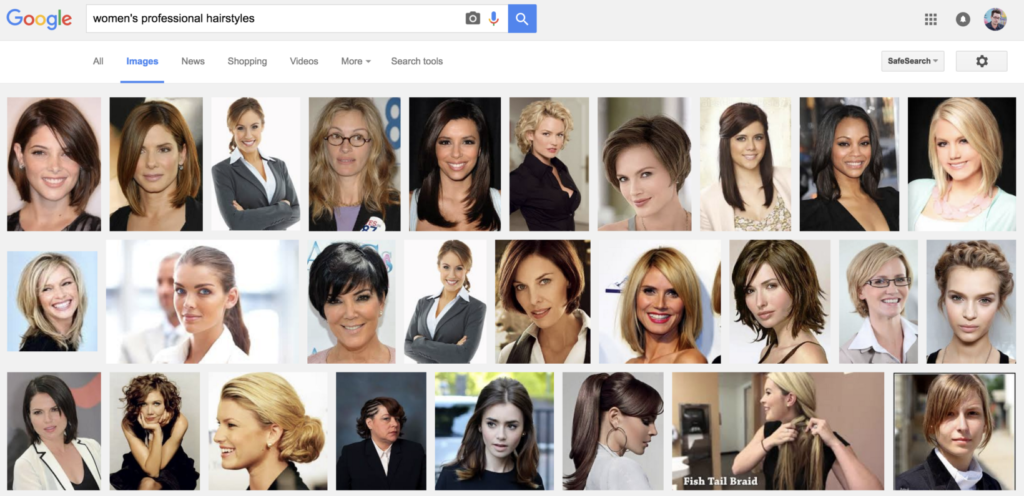

When you perform a Google search for everyday queries, you don’t typically expect systemic racism to rear its ugly head. Yet, if you’re a woman searching for a hairstyle, that’s exactly what you might find.

A simple Google image search for ‘women’s professional hairstyles’ returns the following:

… you could probably pat Google on the back and say ‘job well done.’ That is, until you try searching for ‘unprofessional women’s hairstyles’ and find this:

It’s not new. In fact, Boing Boing spotted this back in April.

What’s concerning, though, is just how much of our lives we’re on the verge of handing over to artificial intelligence. With today’s deep learning algorithms, the ‘training’ of this AI is often as much a product of our collective hive mind as it is programming.

Artificial intelligence, in fact, is using our collective thoughts to train the next generation of automation technologies. All the while, it’s picking up our biases and making them more visible than ever.

This is just the beginning … If you want the scary stuff, we’re expanding algorithmic policing that relies on many of the same principles used to train the previous examples. In the future, our neighborhoods will see an increase or decrease in police presence based on data that we already know is biased.

Source: The Next Web

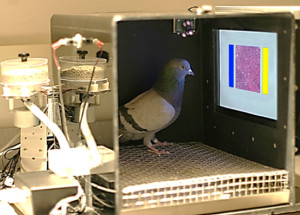

Pigeons diagnose breast cancer, could teach AI to read medical images

After years of education and training, physicians can sometimes struggle with the interpretation of microscope slides and mammograms. [Richard] Levenson, a pathologist who studies artificial intelligence for image analysis and other applications in biology and medicine, believes there is considerable room for enhancing the process.

“While new technologies are constantly being designed to enhance image acquisition, processing, and display, these potential advances need to be validated using trained observers to monitor quality and reliability,” Levenson said. “This is a difficult, time-consuming, and expensive process that requires the recruitment of clinicians as subjects for these relatively mundane tasks. Pigeons’ sensitivity to diagnostically salient features in medical images suggest that they can provide reliable feedback on many variables at play in the production, manipulation, and viewing of these diagnostically crucial tools, and can assist researchers and engineers as they continue to innovate.”

“Pigeons do just as well as humans in categorizing digitized slides and mammograms of benign and malignant human breast tissue,” said Levenson.

Source: KurzweilAI.net