The study, which explored how robots can gain a human’s trust even when they make mistakes, pitted an efficient but inexpressive robot against an error-prone, emotional one and monitored how their human colleagues treated each of them.

The researchers found that people are more likely to forgive a personable robot’s mistakes, and will even go so far as lying to the robot to prevent its feelings from being hurt.

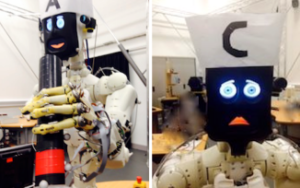

Researchers at the University of Bristol and University College London created a robot called Bert to help participants with a cooking exercise. Bert was given two large eyes and a mouth, making it capable of looking happy and sad, or not expressing emotion at all.

“Human-like attributes, such as regret, can be powerful tools in negating dissatisfaction,” said Adrianna Hamacher, the researcher behind the project. “But we must identify with care which specific traits we want to focus on and replicate. If there are no ground rules then we may end up with robots with different personalities, just like the people designing them.”

In one set of tests the robot performed the tasks perfectly and didn’t speak or change its happy expression. In another it would make a mistake that it tried to rectify, but wouldn’t speak or change its expression.

A third version of Bert would communicate with the chef by asking questions such as “Are you ready for the egg?” But when it tried to help, it would drop the egg and react with a sad face in which its eyes widened and the corners of its mouth were pulled downwards. It then tried to make up for the fumble by apologising and telling the human that it would try again.

Once the omelette had been made, this third Bert asked the human chef if it could have a job in the kitchen. Participants in the trial said they feared that the robot would become sad again if they said no. One of the participants lied to the robot to protect its feelings, while another said they felt emotionally blackmailed.

At the end of the trial the researchers asked the participants which robot they preferred working with. Even though the third robot made mistakes, 15 of the 21 participants picked it as their favourite.

Source: The Telegraph